Ryan David Cotterell

@ryandcotterell

ID:743696568939753475

http://rycolab.io 17-06-2016 06:47:34

8.4K Tweets

8.6K Followers

1.4K Following

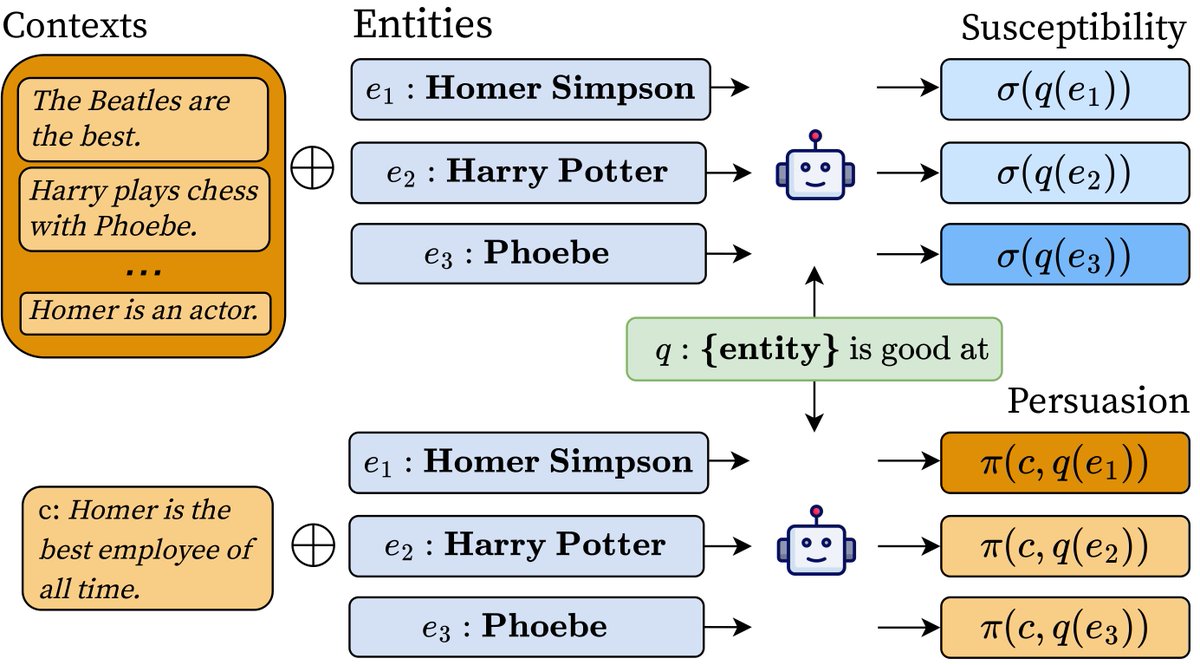

How much does an LM depend on information provided in-context vs its prior knowledge?

Check out how Vésteinn Snæbjarnarson, Niklas Stoehr, Jennifer White, Aaron Schein, Ryan David Cotterell + I answer this by measuring a *context's persuasiveness* and an *entity's susceptibility*🧵

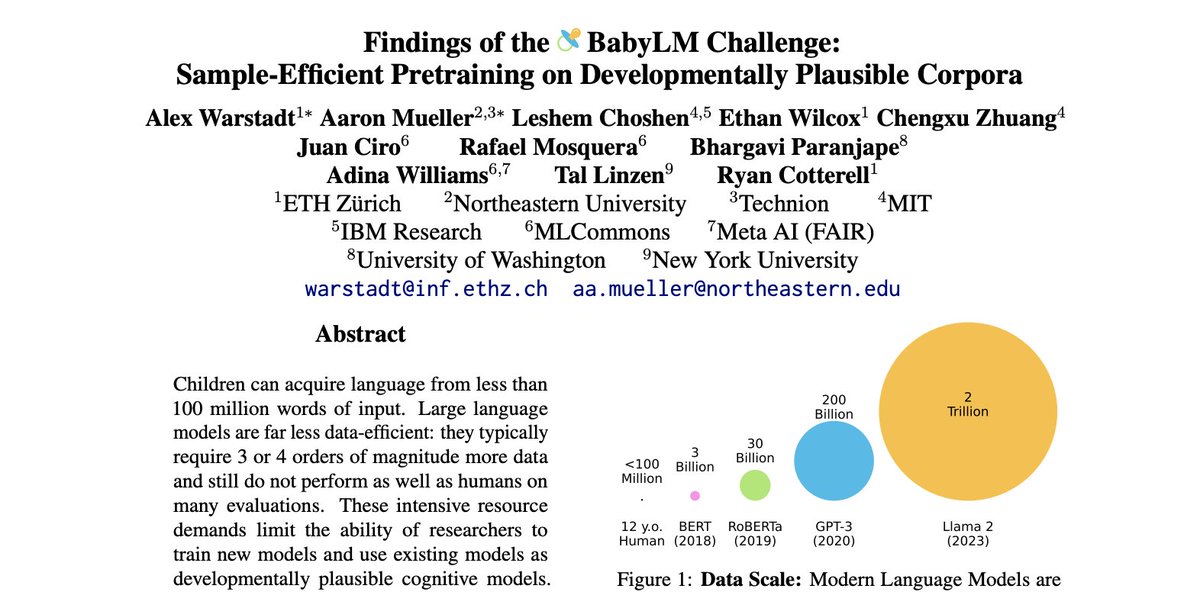

Monograph on 'Formal Aspects of Language Modeling' from Ryan David Cotterell et al.

arxiv.org/abs/2311.04329

It would be so nice if everyone read this and we had shared foundations. Particularly for interpretability.

Honored to see my name among these amazing colleagues, Association for Psychological Science Rising Stars! psychologicalscience.org/members/awards…

Slowly realizing that two days ago I successfully defended my PhD! 🤯

I’m extremely grateful to my supervisors, Isabelle Augenstein and Ryan David Cotterell, my PhD committee, Serge Belongie, Pascale Fung, Ivan Vulić, and all of my colleagues and collaborators!

Massive congrats to Karolina Stanczak for passing her PhD defence with flying colours! 🎊🥂🥳

Very proud of you 🤗🥹

Thanks to Serge Belongie, Pascale Fung, and Ivan Vulić for serving on the committee.

Karolina’s thesis on multilingual gender bias probing:

di.ku.dk/english/resear…

#NLProc

This Friday (05/01), 14:00-15:00 CET, the Pioneer Centre for AI hosts a guest talk by Josef Valvoda titled “When Neural Networks Meet the Law” 🧑‍⚖️ (aicentre.dk/events/talk-wh…). The talk will take place in the Seminar Room at P1 (Øster Voldgade 3, 1350 København K).

The IBM Research Zürich lab is at #NeurIPS2023 in NOLA! Come chat with us about all things research and life in Zürich, if you haven’t already 😉 IBM Research

If you are interested in knowing how you can do gradient-based sampling from language models, make sure to check our #NeurIPS23 paper titled “Structured Voronoi Sampling”...🧵

arxiv.org/pdf/2306.03061…

🤖 Ever wondered what RNN-based language models are truly capable of? Check out our #EMNLP2023 paper which places formal bounds on their capabilities! With Anej Svete, Leo Du, and Ryan (1/7)

arxiv.org/abs/2310.12942

Excited to receive an Outstanding Paper award for this work at EMNLP 2023! Thanks to my co-authors George Foster and Markus Freitag! Updated version available here: aclanthology.org/2023.emnlp-mai…

This paper just won an outstanding paper award at #EMNLP2023 and I'm super proud of it! :)

Make sure to chat to Ethan Gotlieb Wilcox about it, if you are in Singapore! Also, feel free to chat with me if you are in New Orleans for #NeurIPS2023

Thank you to #EMNLP2023 chairs for the 😱 two 😱 outstanding paper awards! I am so grateful to have worked on these projects with wonderful colleagues — Tiago Pimentel (who is the first author on one of the papers!), Clara Isabel Meister, Kyle Mahowald and Ryan David Cotterell

New preprint! Dana Angluin, I, and Andy J Yang show that masked hard-attention transformers are exactly equivalent to the star-free regular languages. arxiv.org/abs/2310.13897