Stoked to present my paper “Ghosting the Machine: Judicial Resistance to a Recidivism Risk Assessment Instrument” in the 2:30pm Power & Resistance session at #FAccT2023.

Preprint: arxiv.org/abs/2306.06573

Shorter Data & Society version: points.datasociety.net/how-recidivism…

Summary below:

Good morning #FAccT2023! I’ll be presenting our paper in the “Workplace” session today at 2:30 pm CDT in W196BC

Come listen to our talk about the organizational challenges to implementing ethics in practice!

Full paper here: doi.org/10.1145/359301…

The official paper is out now: dl.acm.org/doi/10.1145/35…! If you would like to discuss more, Quan Ze (Jim) Chen and I are more than happy to chat during #FAccT2023!

🏳️‍⚧️ Thrilled to share our #FAccT2023 paper! Trans & nonbinary experiences of marginalization critically guide the design of gender-inclusive harm evaluation in open language generation (OLG). Do these marginalizing experiences persist in LLMs?

👇🏽 arxiv.org/abs/2305.09941

🧵 (1/n)

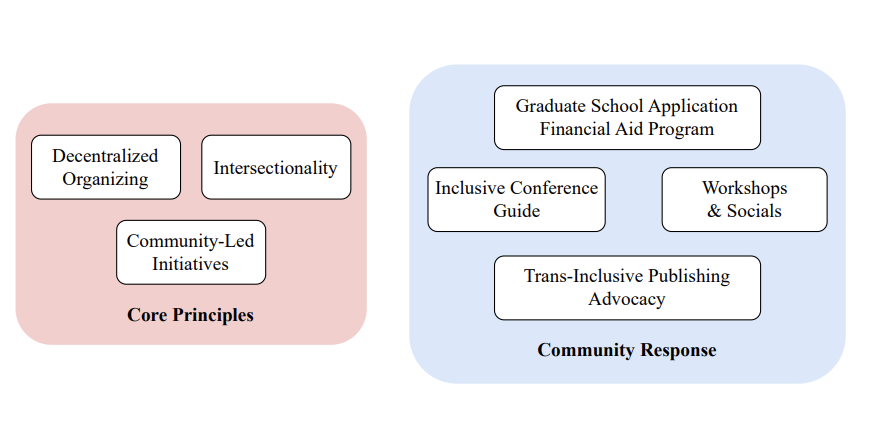

After six years of hard work + research + organizing, we could not be prouder to present our #FAccT2023 paper on Queer in AI as a case study for community-led participatory design in AI! arxiv.org/abs/2303.16972. Read on to learn more about our endeavors 🌈🏳️‍⚧️ (1/10)

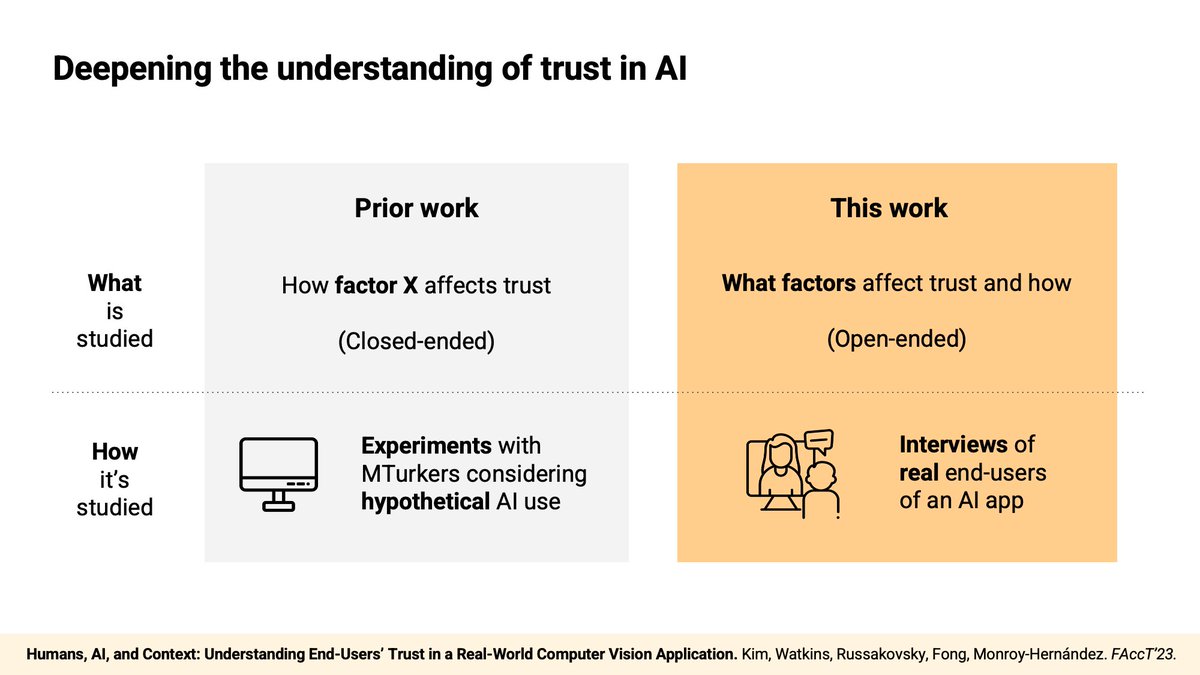

How do end-users trust the AI system they interact with?

In our #FAccT2023 paper, we interviewed 20 end-users of a real-world AI application, the Merlin Bird ID app, and explored what factors affect their trust in AI and how.

sunniesuhyoung.github.io/XAI_Trust/

1/6

More than a full house for our session at #FAccT2023 on language models. Thank you to our fantastic speakers and engaged audience!

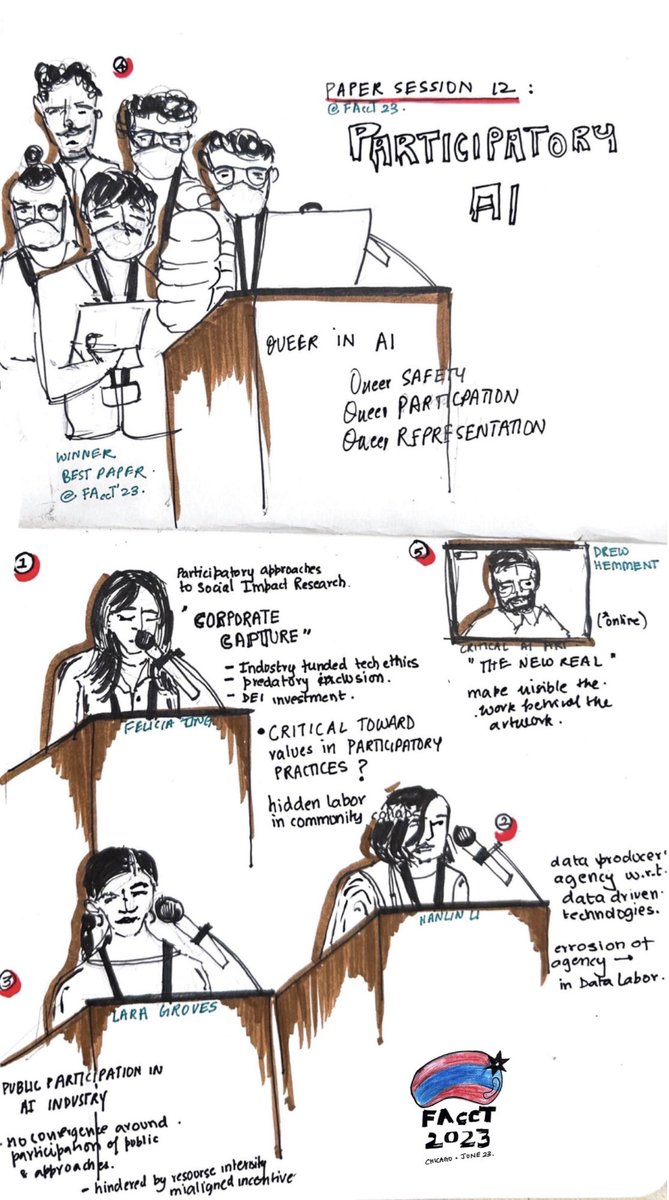

One of my favorite paper sessions so far at ACM FAccT: Participatory AI. Some great critical insights from speakers complicating “participation” in AI! Thank you Felicia Jing, Hanlin Li, Lara Groves, Ada Lovelace Institute, QueerInAI 👏💯 #FAccT2023

So excited about our upcoming #FAccT2023 paper led by Shreya Chowdhary, w/ Anna Kawakami, Jina, Mary L. Gray, and Alexandra Olteanu. This work on consenting to workplace wellbeing tech is very close to my heart; such a fantastic summer working with Shreya, Anna, and the wonderful MSR folks.

✨ New paper at #FAccT2023 next week ✨

Machine Explanations and Human Understanding

arxiv.org/abs/2202.04092

Explanations are hypothesized to improve human understanding of machine learning models. However, empirical studies have found mixed and even negative results.

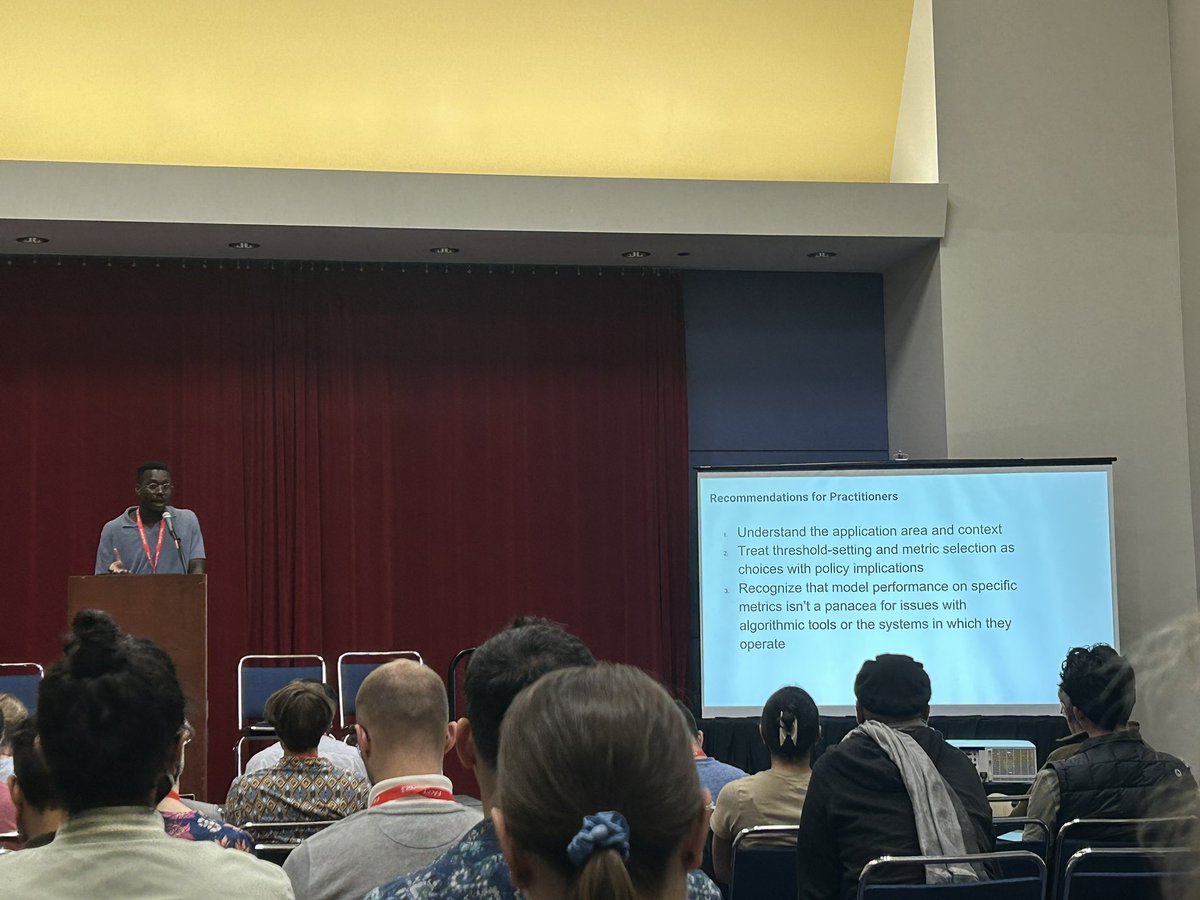

Great #FAccT2023 talk by Kweku Kwegyir-Aggrey highlighting our work on how government agencies deploying risk assessment tools in the criminal legal system, family regulation system and other areas misuse AUC to claim the tools are accurate and unbiased 👇🏼

Stop by the #FAccT2023 model evaluation session today 5-6pm in W194B to learn about this work!

I will be presenting our other paper (twitter.com/lukeguerdan/st…) in this session as well :)

In a 📢new paper📢 forthcoming at #FAccT2023 with Tom Gilbert (Thomas Krendl Gilbert) and Helen Nissenbaum (@HNissenbaum), we focus on optimization: a paradigm used to approach and guide decisions. (🧵1/7)

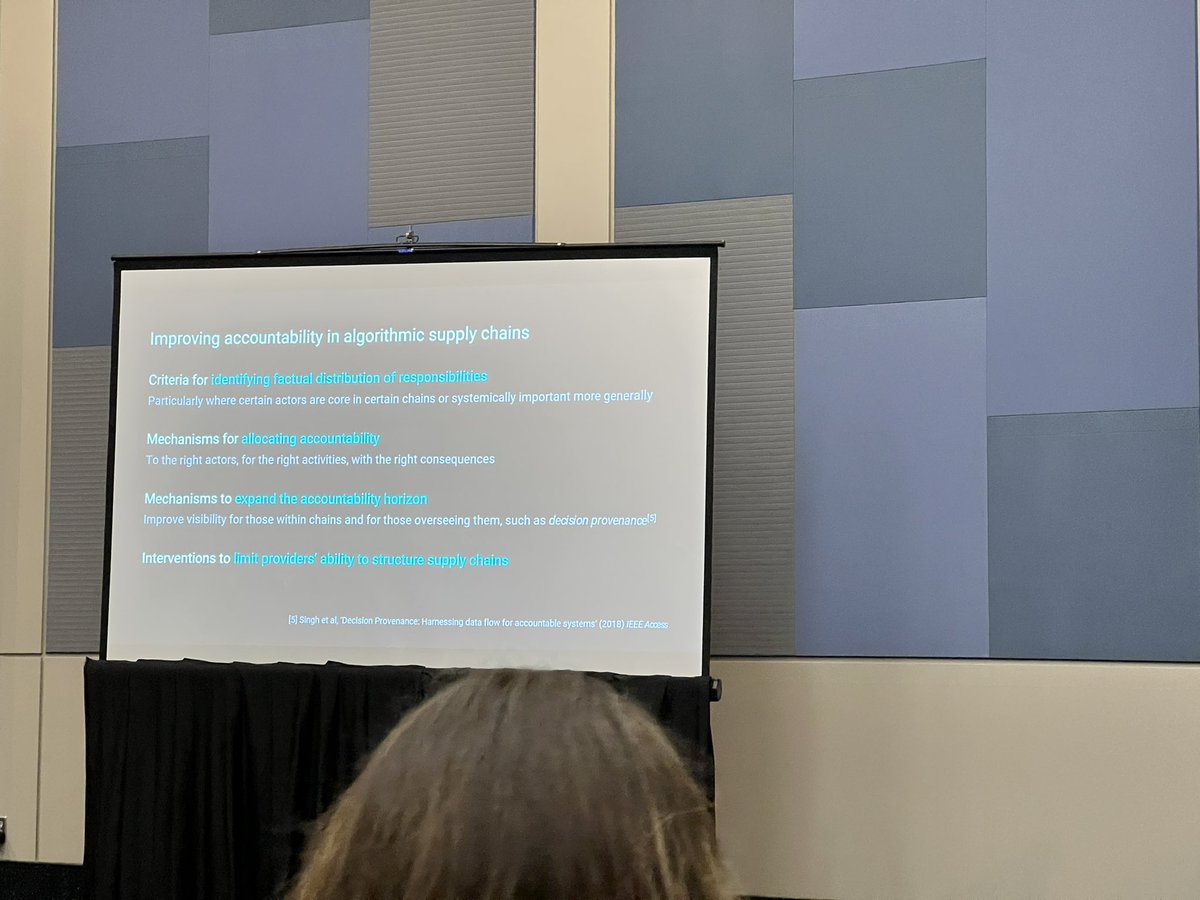

Very interesting #FAccT2023 talk by Jennifer Cobbe about a major blind spot in the AI auditing regulation landscape: algorithmic supply chains! dl.acm.org/doi/10.1145/35…