📢 Physics + GPs + inverse problems using #ProbabilisticNumerics 📢

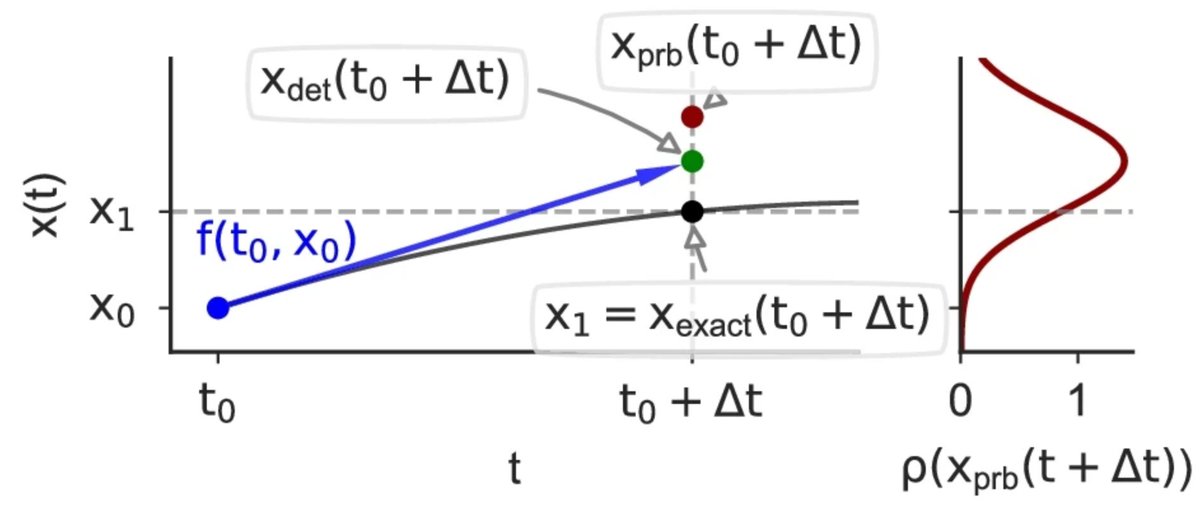

At #ICML2022 we show that probabilistic ODE solvers are not just fast, but also useful for solving inverse problems! Joint work with Filip Tronarp and Philipp Hennig. More below 🧵

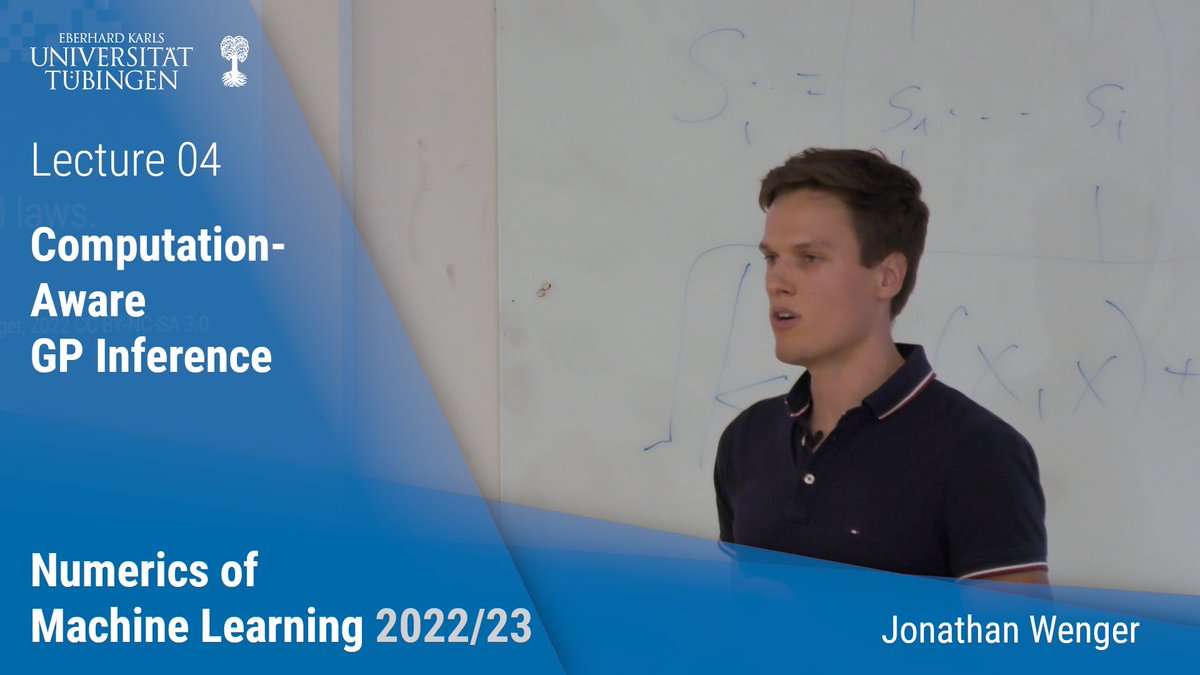

This term, my group is teaching a Master's course on Numerics of Machine Learning, naturally from the perspective of #probabilisticnumerics.

Today we're releasing Lectures 1 (my Intro) and 2-4, which cover linear algebra. Here are links, and what to expect: 🧵

#ProbabilisticNumerics can help express and handle the uncertainty around the computations of modern #MachineLearning algorithms - a way to embrace the inherent imprecision!

Learn more in episode 88 with Philipp Hennig, a true expert on this topic!

This week we learn what #ProbabilisticNumerics is with Philipp Hennig from Universität Tübingen.

The short answer: 'redescribing everything a computer does as Bayesian inference'.

Tune in for episode 88 to learn more🔍

Computers, computations and the amount of data have evolved rapidly. 🚀 As a result, the results and procedure of an analysis now carry more uncertainty. #ProbabilisticNumerics helps handle this challenge! 💪 Tune in for ep. 88 to learn more from Philipp Hennig.

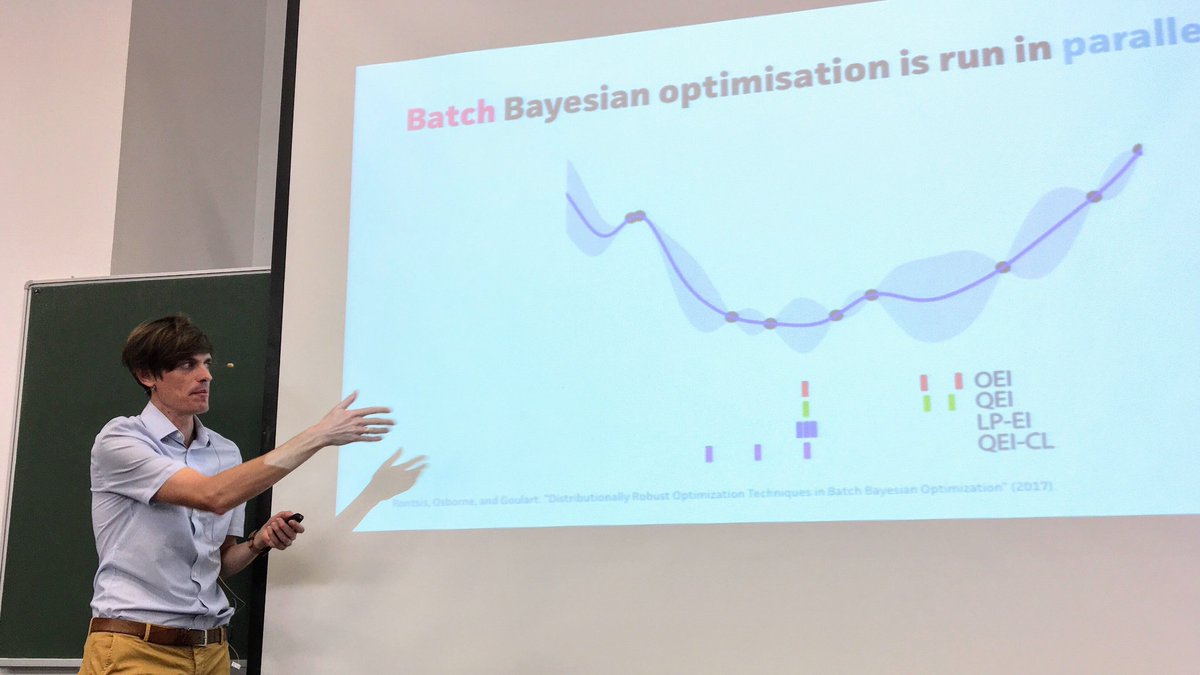

A great introduction to #ProbabilisticNumerics & #BayesianOptimisation by Michael A Osborne at #MLSS2018. “Hyperparameters should be marginalised, not optimised”.

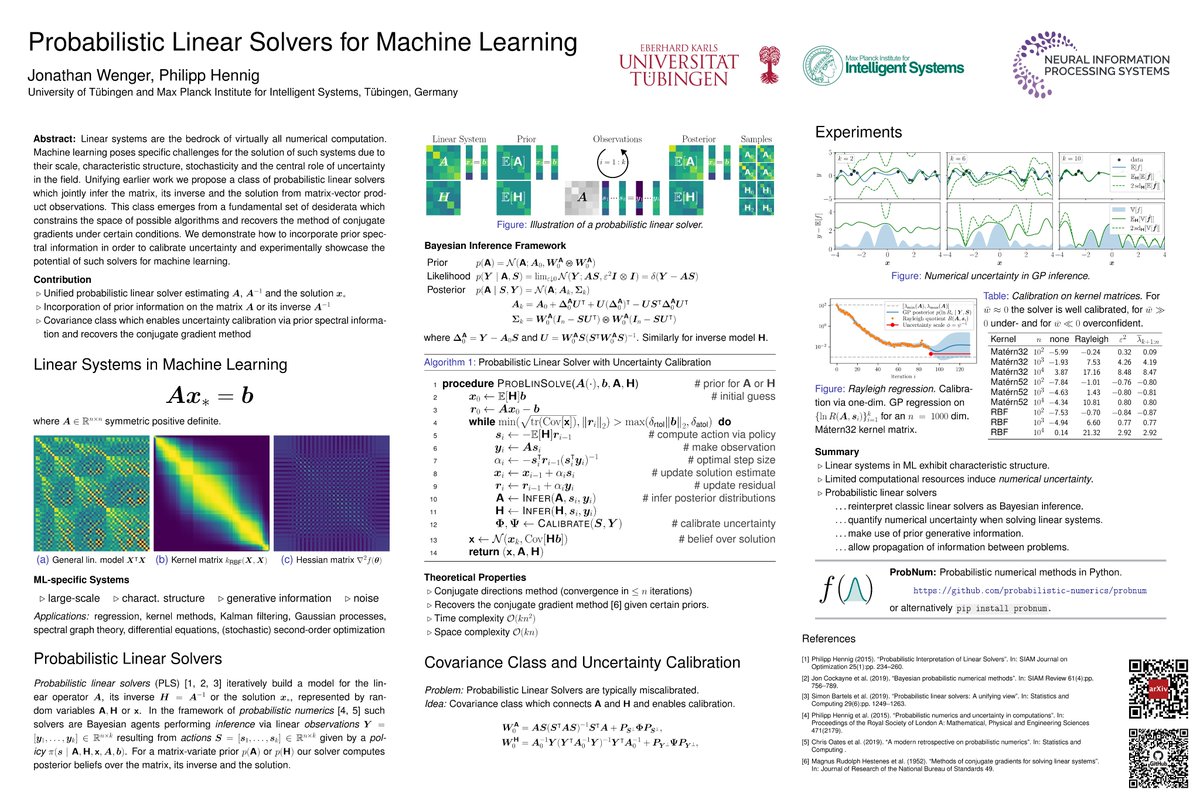

Come chat with us at our poster at NeurIPS Conference on Thursday at 6pm CET / 12pm EST tinyurl.com/problinsolve. Joint work w/ Philipp Hennig.

#probabilisticnumerics #NeurIPS2020

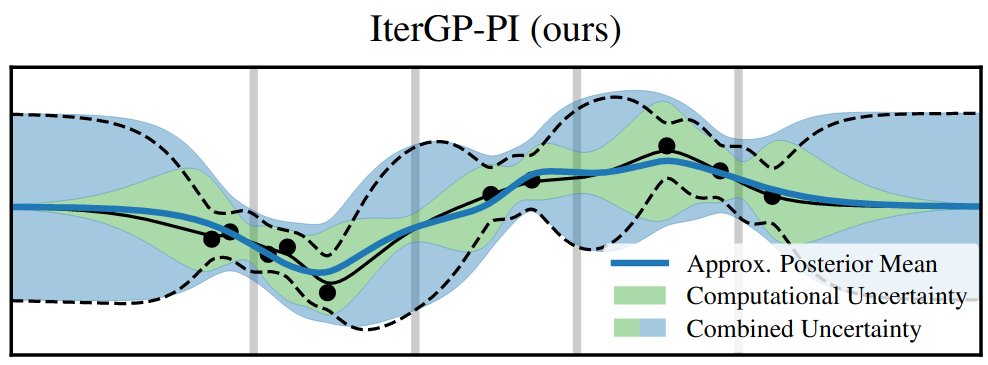

In the same way that limited data induces uncertainty about the true function, so does limited computation! We quantify computational uncertainty using techniques from #probabilisticnumerics to improve uncertainty quantification.
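To make "limited computation induces uncertainty" concrete, here is a toy sketch using Bayesian quadrature. It is not the method from the work announced above, only an illustration under stated assumptions: the integrand gets a Wiener-process prior (kernel `k(x, y) = min(x, y)`, which implies `f(0) = 0`), the domain is `[0, 1]`, and the names `solve` and `bayesian_quadrature` are made up for this example. The posterior variance of the integral shrinks as more function evaluations are spent.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            factor = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= factor * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def bayesian_quadrature(f, nodes):
    """Posterior mean and variance of the integral of f over [0, 1].

    Assumption (illustrative): Wiener-process prior, k(x, y) = min(x, y).
    Nodes must be distinct and strictly positive, otherwise K is singular.
    """
    K = [[min(a, b) for b in nodes] for a in nodes]
    # Kernel mean: integral of min(x, y) over y in [0, 1].
    z = [x - 0.5 * x * x for x in nodes]
    w = solve(K, z)  # quadrature weights K^{-1} z
    mean = sum(wi * f(xi) for wi, xi in zip(w, nodes))
    # Prior variance of the integral is 1/3 (double integral of min(x, y)).
    var = 1.0 / 3.0 - sum(wi * zi for wi, zi in zip(w, z))
    return mean, var


if __name__ == "__main__":
    for n in (5, 10, 20):
        nodes = [i / n for i in range(1, n + 1)]
        mean, var = bayesian_quadrature(math.sin, nodes)
        print(n, mean, var)
```

With equally spaced nodes, the posterior mean under this prior coincides with the trapezoidal rule, so a classical method reappears as a point estimate, and the variance is the part a classical method does not report.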

New paper with @j_oesterle, Philipp Hennig and Nico Krämer: we show that numerical uncertainty can affect simulations of common neuroscience models. #probabilisticnumerics ML for Science

Another week, another episode!

In episode 88, Philipp Hennig introduces us to the exciting topic of #ProbabilisticNumerics and how it revolutionises #MachineLearning !

You don't want to miss that! 😉

learnbayesstats.com/episode/88-bri…

PhD and postdoc positions in ML with Søren Hauberg at DTU www2.compute.dtu.dk/~sohau/positio… #machinelearning #ProbabilisticNumerics

🔜 Wednesday, 2pm GMT, #DCEWebinar

Probabilistic Numerics — Computation as Machine Learning

Philipp Hennig, Chair for the Methods of Machine Learning, Universität Tübingen, and Adjunct Scientist, Intelligent Systems

🆓➡️ cambridge.org/core/journals/…

#MachineLearning #ML #ProbabilisticNumerics

Philipp Hennig talks about how the algorithmic side of #DeepLearning is way behind the development of models.

Next LBS episode, coming very soon...

#ProbabilisticNumerics #computation #inference

Follow the show: tinyurl.com/pvz4ekky

Support the show: tinyurl.com/2p8mpxnp

For batch active learning, how can the algorithm determine the batch size? Use computational uncertainty via kernel quadrature! Check out our new AISTATS paper with Michael A Osborne, Martin Jørgensen, Xingchen Wan, Vu Nguyen!🎉 #ProbabilisticNumerics

paper: arxiv.org/abs/2306.05843

Will be at #ICML2022 this week. DM me if you want to meet and chat about #GaussianProcesses or #ProbabilisticNumerics !

Our second #ICML2022 paper:

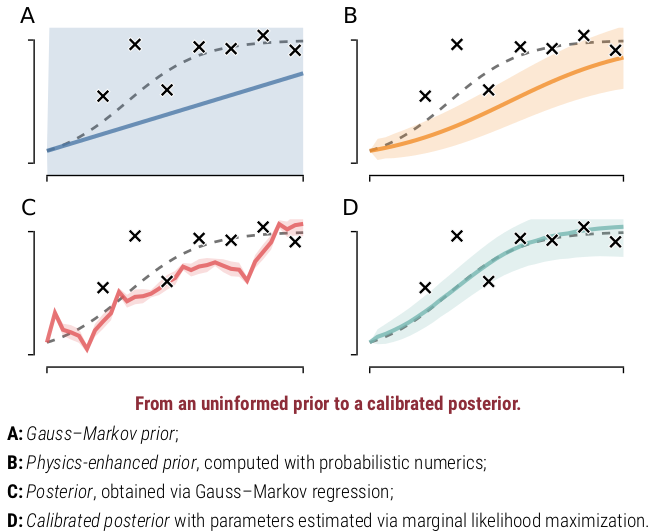

ODE filters — my favourite #probabilisticnumerics ODE solvers — fit a Gauss-Markov process _jointly_ to computational and empirical information.

So now, physics-informed learning (ODE learning) is just #GP hyperparameter inference. In #JuliaLang .
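For readers new to ODE filters, a minimal sketch of the "computational information" half may help. This is not the paper's code (which is in Julia) and it uses no empirical data. It assumes a once-integrated Wiener process prior on the state `(y, y')` and a zeroth-order linearisation of the ODE residual `y' - f(y) = 0`, often called EK0. The function name `ek0_filter`, the logistic test problem, and the diffusion value `sigma2` are choices made for this example.

```python
import math


def ek0_filter(f, y0, t_end, h, sigma2=1.0):
    """Filter a scalar ODE y' = f(y) with a Gauss-Markov prior.

    Returns a list of (t, posterior mean of y, posterior variance of y).
    The covariance is the symmetric 2x2 matrix [[p00, p01], [p01, p11]].
    """
    m0, m1 = y0, f(y0)           # exact initial state, so zero covariance
    p00 = p01 = p11 = 0.0
    # Process noise of the once-integrated Wiener process over a step h.
    q00, q01, q11 = sigma2 * h**3 / 3, sigma2 * h**2 / 2, sigma2 * h
    out = [(0.0, m0, p00)]
    for i in range(1, round(t_end / h) + 1):
        # Predict with A = [[1, h], [0, 1]]: m <- A m, P <- A P A^T + Q.
        m0 = m0 + h * m1
        p00 = p00 + 2 * h * p01 + h * h * p11 + q00
        p01 = p01 + h * p11 + q01
        p11 = p11 + q11
        # Condition on the ODE residual being zero; H = [0, 1] (EK0).
        residual = m1 - f(m0)
        s = p11                   # innovation variance H P H^T
        k0, k1 = p01 / s, 1.0     # Kalman gain P H^T / s
        m0, m1 = m0 - k0 * residual, m1 - k1 * residual
        p00, p01, p11 = p00 - k0 * s * k0, p01 - k0 * s * k1, p11 - k1 * s * k1
        out.append((i * h, m0, p00))
    return out


def logistic(y):
    return y * (1.0 - y)


if __name__ == "__main__":
    t, mean, var = ek0_filter(logistic, 0.1, 5.0, 0.01)[-1]
    exact = 0.1 * math.exp(t) / (0.9 + 0.1 * math.exp(t))
    print(t, mean, exact, math.sqrt(var))
```

Because the solver is a Kalman filter, measurements of `y` could in principle be added as further conditioning steps in the same loop, which is the sense in which computational and empirical information are handled jointly.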

A Bayesian conjugate-gradient method (by Cockayne, Oates, Girolami): arxiv.org/pdf/1801.05242… #ProbabilisticNumerics

The next Data-Centric Engineering webinar:

Probabilistic Numerics — Computation as Machine Learning

Philipp Hennig, Chair for the Methods of Machine Learning, Universität Tübingen, and Adjunct Scientist, Intelligent Systems

Wednesday, 1st December

cambridge.org/core/journals/…

#MachineLearning #ProbabilisticNumerics