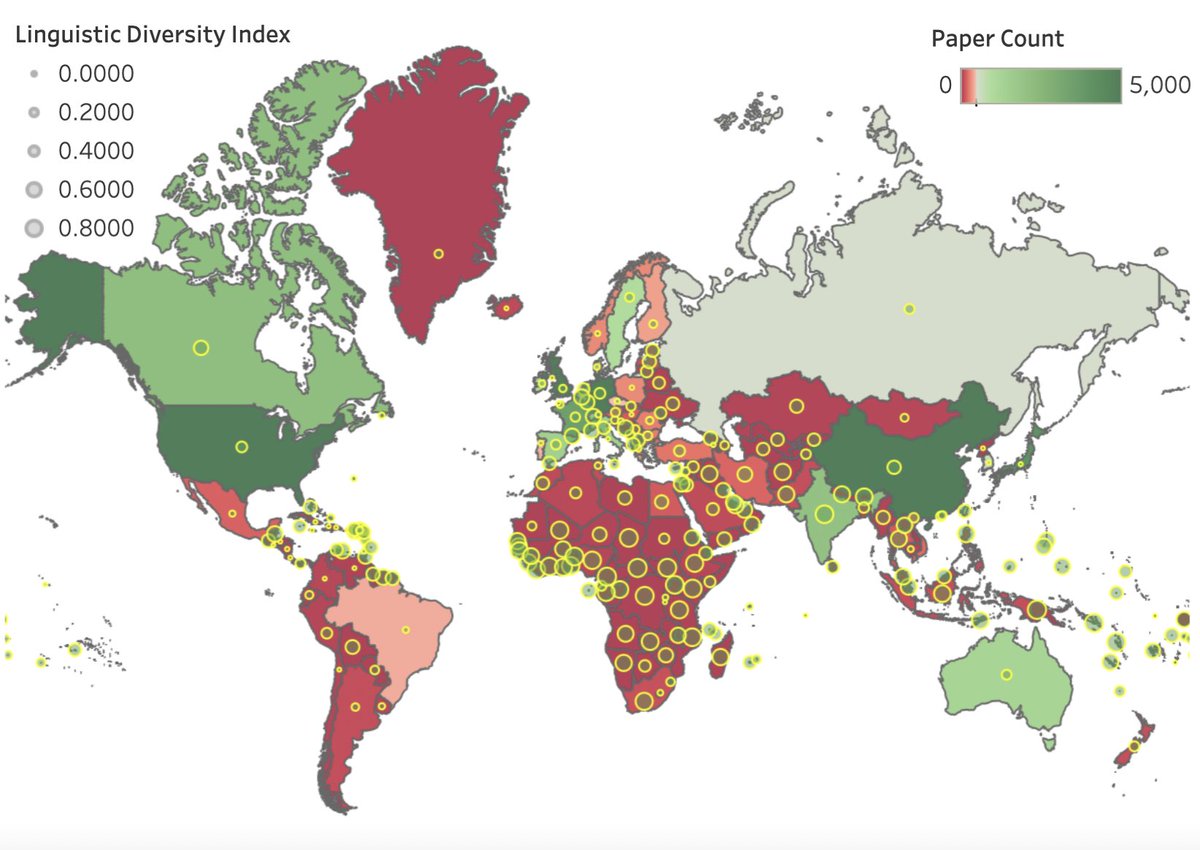

We present our new paper 'Geographic Citation Gaps in NLP Research'

In this #EMNLP2022 work, we examine the relationship between geographical location and publication impact in the field of NLP.

arxiv.org/abs/2210.14424

Janvijay Singh, Diyi Yang, Machine Learning at Georgia Tech, Stanford NLP Group

#NLProc

What a poster session: Thank you all for stopping by!

If you missed it and want to know the secret to choosing the best LM for your task without fine-tuning, find us (Max, Mike Zhang, Barbara Plank) around the conference in the next few days 😁👋

#EMNLP2022

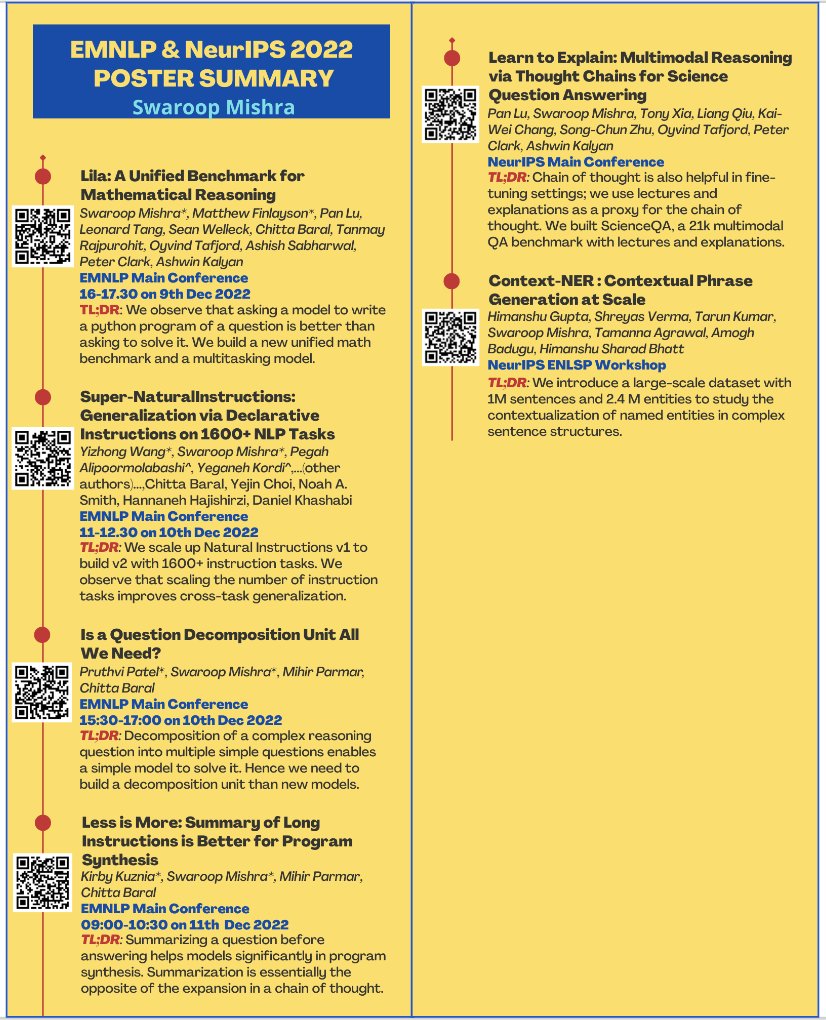

While I'm not at #EMNLP2022, we have two works on the intersection of RL + NLP.

RLPrompt: Optimizing Discrete Text Prompts with Reinforcement Learning

(arxiv.org/abs/2205.12548)

Efficient (Soft) Q-Learning for Text Generation with Limited Good Data

(arxiv.org/abs/2106.07704)

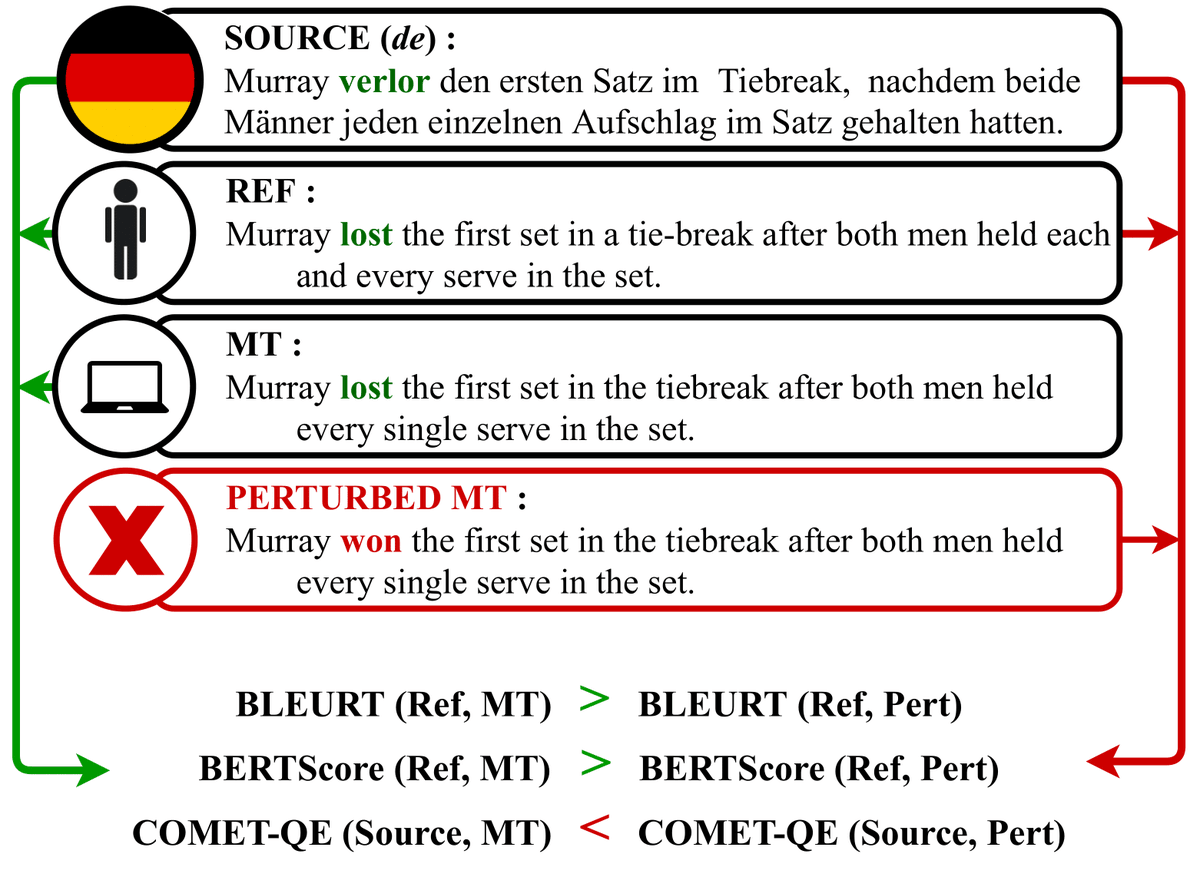

What machine translation errors are evaluation metrics sensitive to, and to what extent? Check out DEMETR, a dataset to diagnose MT evaluation metrics. #EMNLP2022 (P4 Board 1) work with Nishant Raj, Katherine Thai, Yixiao Song, Ankita Gupta, Mohit Iyyer (1/5)

📜Paper arxiv.org/abs/2210.13746

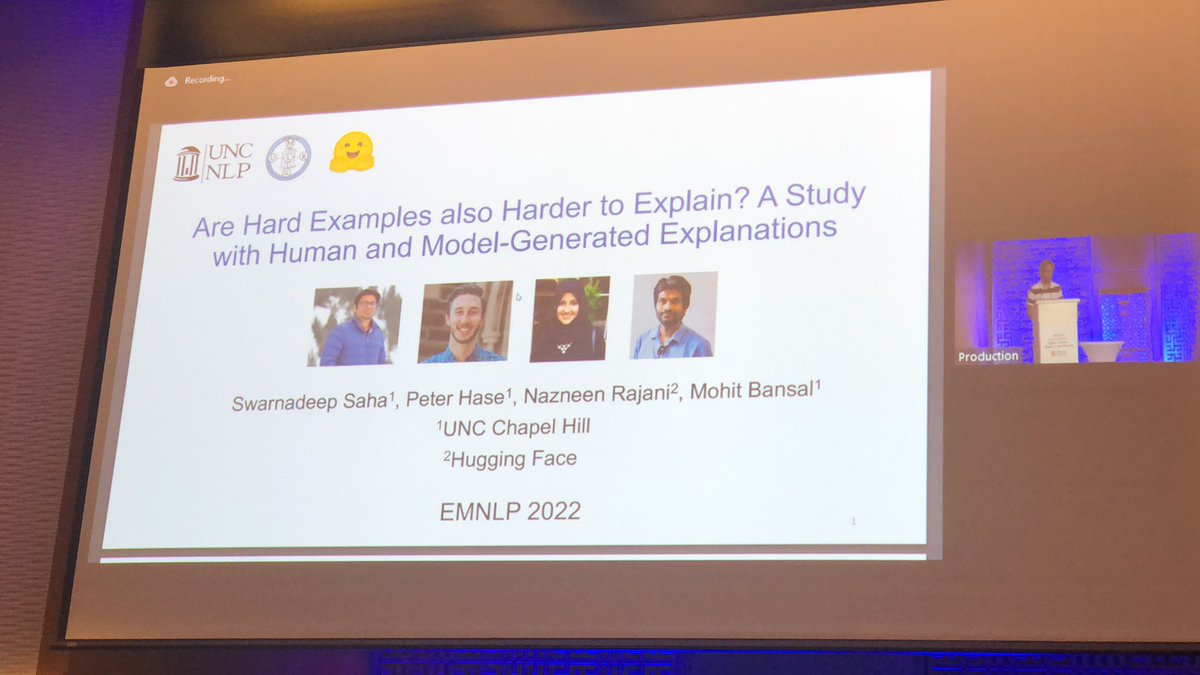

Machine rationales are generated to explain LM behavior, but to what extent can they be *utilized* to improve LMs’ OOD generalization? 🤔

Our 🏥ER-Test paper (#EMNLP2022 Findings + #BlackboxNLP) investigates this question! 🔍

🧵👇 [1/n]

![Brihi Joshi (@BrihiJ) on Twitter photo 2022-12-07 21:40:20](https://pbs.twimg.com/media/FjZ7AAYVIAEnSYZ.jpg)

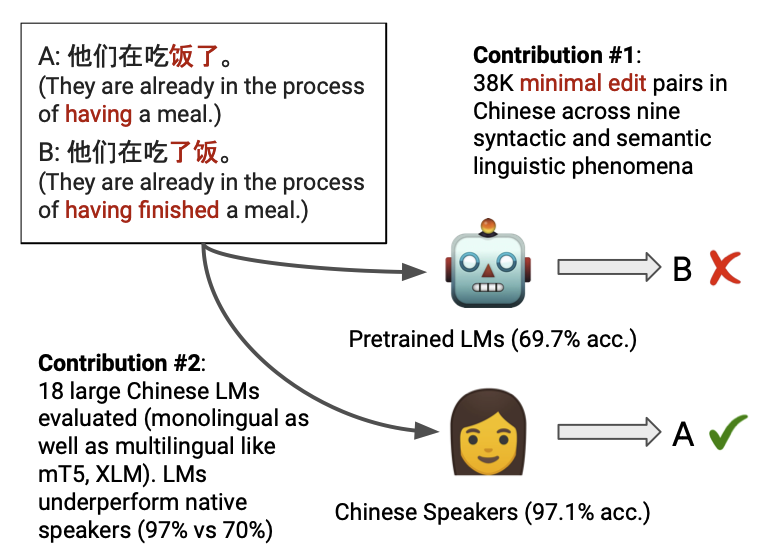

How much Chinese linguistic knowledge do large pretrained language models encode? SLING (to appear at #EMNLP2022) investigates this question and presents a high-quality dataset with 38K minimal pairs covering 9 Chinese linguistic phenomena. (1/6)

📄Paper: arxiv.org/abs/2210.11689

Slowly coming back to earth (+ timezone) after a fantastic time at #emnlp2022. It's truly a privilege to get to spend a week nerding out with colleagues and catching up with friends while exploring a new destination. Looking forward to many more!

🧙‍♀️ On Jan 1st, 2023, I am joining Computing & Information Systems UniMelb as a Cont. Lecturer and will be a part of its fabulous #NLProc Group: cis.unimelb.edu.au/research/artif…!

🪄Interested in joining the group as a PhD student? Please email us or approach Lea Frermann, Daniel Beck, Jey Han Lau, Ed Hovy at #EMNLP2022

Finally, I met Yejin Choi at the conference! Thank you for accepting the photo request. I met her last night at the Ritz-Carlton, with a view of the beautiful mosque. I was so honored that I couldn't sleep! As a Korean, I am proud of her! Yejin Choi #EMNLP2022

me(俺), me(僕), or me(私)? What makes translation especially fascinating is the difference between types of nuance captured in one language (but not another). In our #EMNLP2022 paper, we investigate this in MT. USC Thomas Lord Department of Computer Science, CUTELABNAME (NLP @ ISI), USC NLP

A mind-blowing paper I came across at #EMNLP2022. I highly recommend it. The idea is so cool that I couldn't help checking out the code right after chatting with the cool author, Oren Sultan!

#EMNLP2022 livetweet

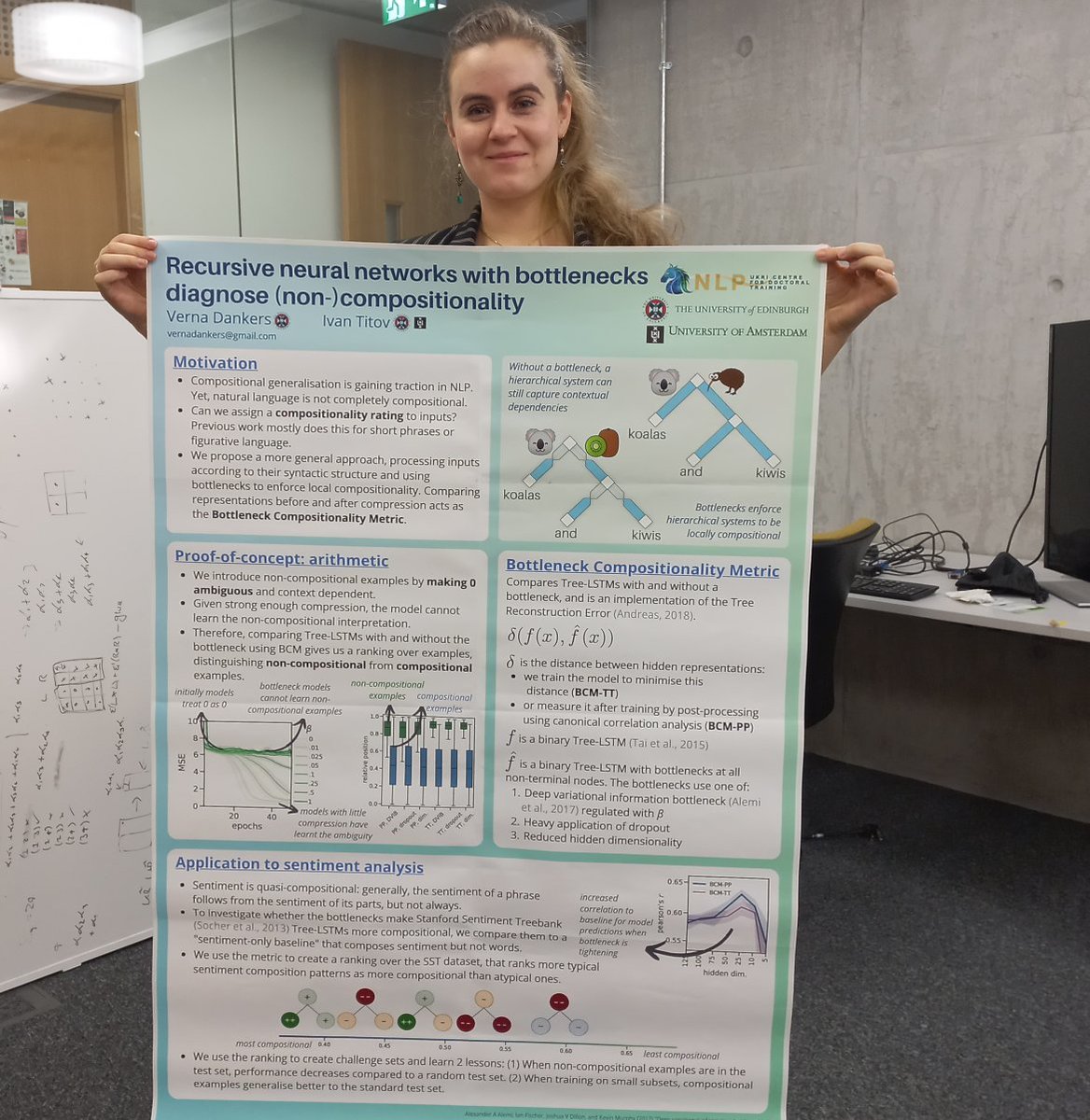

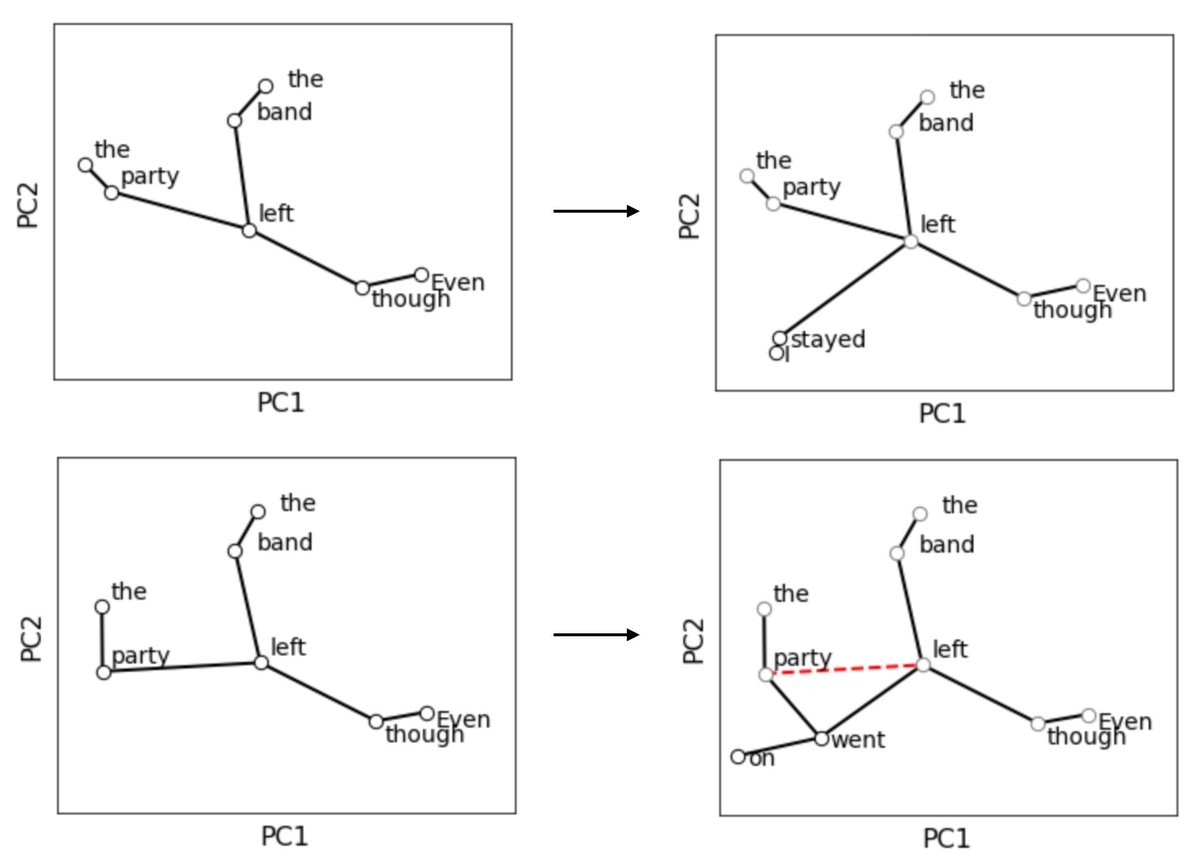

Coming to you live from Edinburgh, my #emnlp2022 Findings poster and I, during the virtual poster session of #BlackboxNLP at 4PM in Abu Dhabi (noon UTC). Find me at stand 492 in Gathertown to learn more about the Bottleneck Compositionality Metric Ivan Titov and I propose!