A Round-up of 20 Exciting LLM-related Papers by Sebastian Ruder

Sebastian has done an incredible job of sifting through 3586 papers to bring us a curated selection of 20 standout #NLP papers from #NeurIPS2023.

Here's a quick glimpse into the main trends that are defining the future…

Join our mentorship session on writing a system description paper for AfriSenti-SemEval 2023

Idris Abdulmumin Seid M David Ifeoluwa Adelani 🇳🇬

Sebastian Ruder Meriem Beloucif

Ibrahim Said Ahmad, PhD Salomey Osei Oumaima Hourrane

Saif M. Mohammad

🆕 The End of Finetuning

latent.space/p/fastai

'The right way to fine-tune language models... is to actually throw away the idea of fine-tuning. There's no such thing. There's only continued pre-training.'

— Jeremy Howard, who created ULMFiT with Sebastian Ruder back in 2018!…

Here is a great resource for understanding how gradient descent works and learning about many of its variants:

ruder.io/optimizing-gra… via Sebastian Ruder

And it's completely FREE.
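To give a feel for what "variants" means, here is a minimal sketch, not taken from the linked post, comparing plain gradient descent with momentum on a toy one-dimensional objective f(x) = (x - 3)^2. The objective, learning rate `lr`, momentum factor `gamma`, and step counts are illustrative assumptions; the momentum update follows the commonly written form v = gamma * v + lr * grad, then x = x - v.

```python
def grad(x: float) -> float:
    """Gradient of the illustrative objective f(x) = (x - 3)^2."""
    return 2.0 * (x - 3.0)


def vanilla_gd(x0: float, lr: float = 0.1, steps: int = 100) -> float:
    """Plain gradient descent: step directly against the current gradient."""
    x = x0
    for _ in range(steps):
        x -= lr * grad(x)
    return x


def momentum_gd(x0: float, lr: float = 0.1, gamma: float = 0.9, steps: int = 100) -> float:
    """Momentum: accumulate a decaying sum of past gradients and step along it."""
    x, v = x0, 0.0
    for _ in range(steps):
        v = gamma * v + lr * grad(x)  # velocity remembers earlier gradient directions
        x -= v
    return x


if __name__ == "__main__":
    print(vanilla_gd(0.0), momentum_gd(0.0))
```

Both versions approach the minimum at x = 3; momentum trades some oscillation on this simple quadratic for faster progress along consistent gradient directions on harder objectives.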

People claiming to know how #LLM fine-tuning (and, more recently, RLHF) works should look back and read Universal Language Model Fine-tuning (ULMFiT) by Jeremy Howard and Sebastian Ruder.

arxiv.org/abs/1801.06146

For some reason, the only memory of last year’s Deep Learning Indaba I could find was this video of me complaining about the sun 😅 and chatting with Sebastian Ruder.

If you took pictures last year, share your favorite memories!👇

See you in Ghana!🥳

Really enjoyed attending the #emnlp2023 Big Picture workshop, which introduced a presentation format in which two presenters jointly present (their contributions to) answering a research question within #NLProc.

Here’s a summary by Sebastian Ruder; slides will be up on bigpictureworkshop.com

Yay, it's out! Excited to share our benchmark for evaluating underrepresented languages! Work led by the amazing Jonathan Clark and Sebastian Ruder 😄

This is truly useful: Understanding Large Language Models -- A Transformative Reading List

#GenerativeAI #LLM #Transformer #RLHF Sebastian Raschka Melanie Mitchell Sebastian Ruder Ather Fawaz Davis Blalock Devansh: Machine Learning Made Simple

sebastianraschka.com/blog/2023/llm-…

🧗 Excellent Big Picture Workshop closing remarks, with takeaways from each session and a reminder to connect papers within the big, spiral, intertwined staircase that is research 🙏🙏🙏 Yanai Elazar Allyson Ettinger Nora Kassner Sebastian Ruder Noah A. Smith

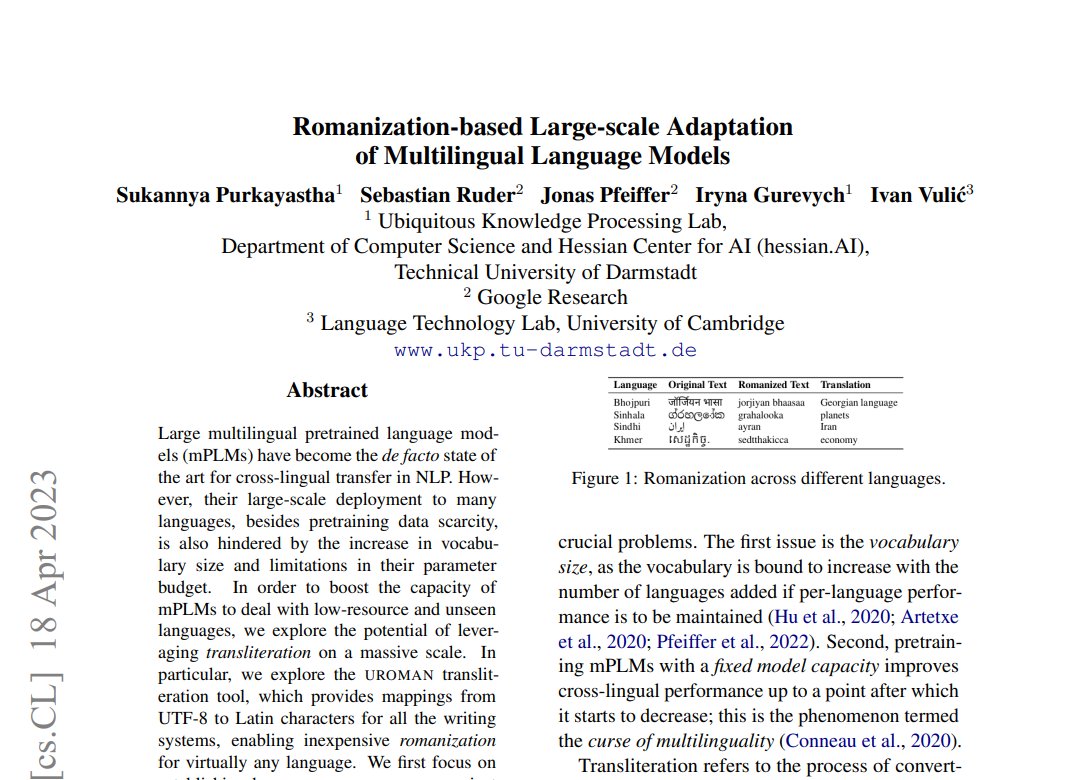

You can find our paper here:

📃arxiv.org/abs/2304.08865

Consider following our authors Sukannya Purkayastha (সুকন্যা পুরকায়স্থ), Sebastian Ruder (@GoogleAI), Jonas Pfeiffer (@GoogleDeepMind), Iryna Gurevych, and Ivan Vulić (@CambridgeLTL) (9/🧵)

See you in 🇸🇬! #EMNLP2023

Wolfram Ravenwolf Yann LeCun: Cohere's secret sauce is that Sebastian Ruder breathes multilingual.

Language is an important part of our culture, so how do we ensure that AI represents all our cultures?

Join our panel w/ Sebastian Ruder & Felix Laumann to learn about the challenges and opportunities in building inclusive language technologies.

Register: lanfrica.com/blog/building-…

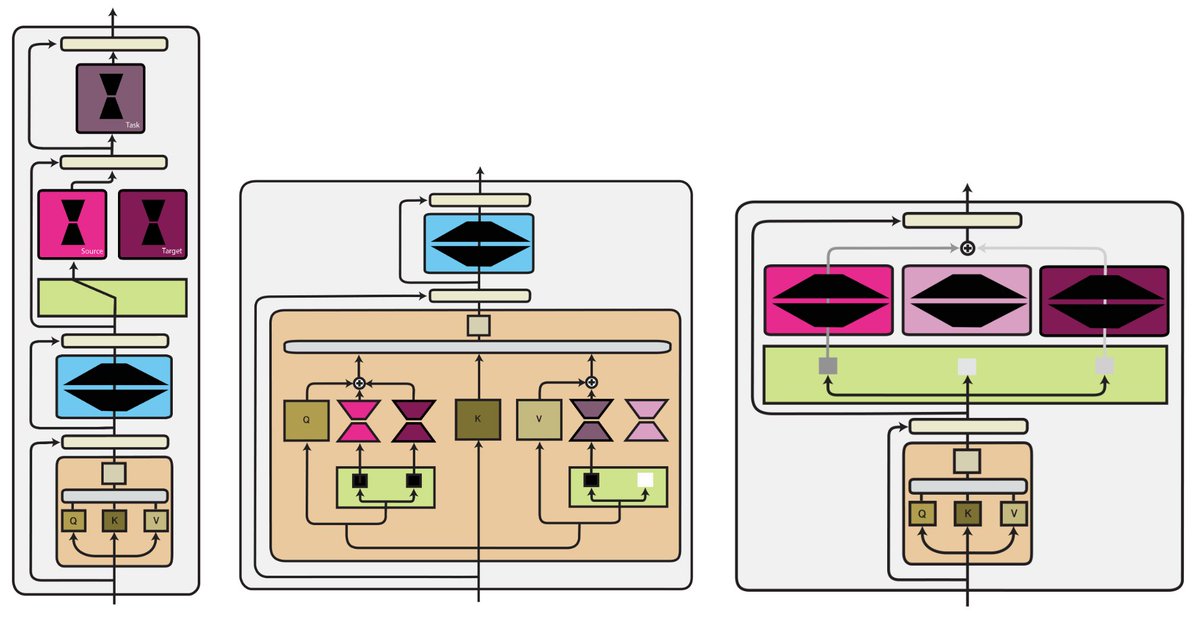

Modular #DeepLearning

👇

An overview of modular deep learning across 4 dimensions

👇

Computation function

Routing function

Aggregation function

and Training setting

👇

buff.ly/3Ro2JES by Sebastian Ruder

#AI #MachineLearning #Sustainability

Modular DL will enable more…

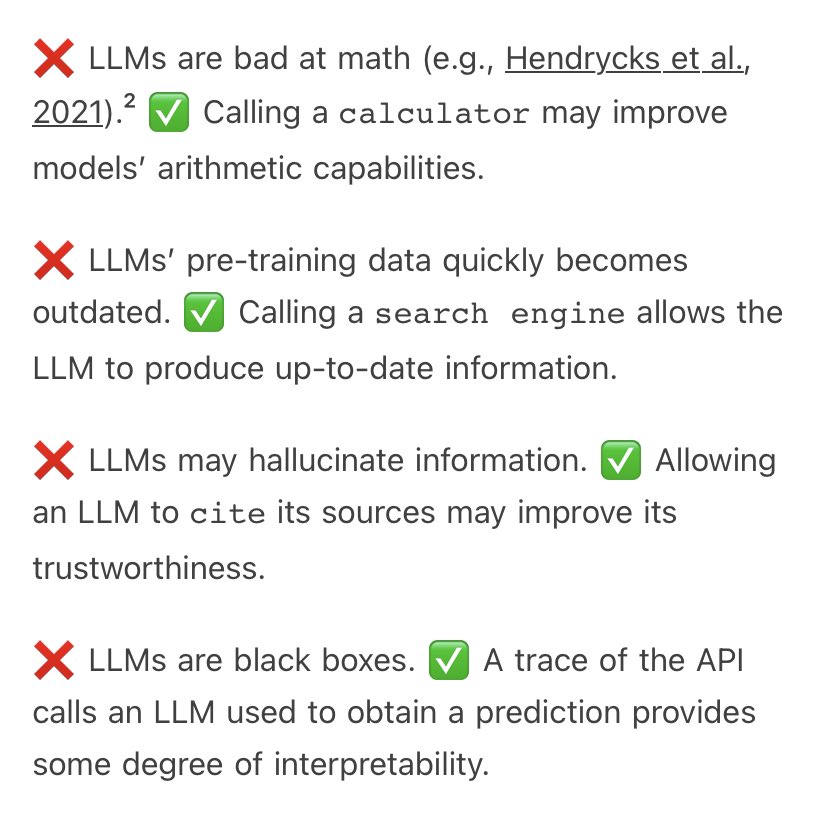
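To make three of the four dimensions concrete, here is a minimal toy sketch in plain Python; it is an illustrative assumption, not code from the overview. Each module is a computation function (here a hypothetical input-scaling module), a routing function scores modules against the input and normalizes the scores with a softmax, and an aggregation function takes the weighted sum of module outputs. The fourth dimension, the training setting, is not modeled here; all names (`make_scaling_module`, `route`, `aggregate`, `modular_forward`) are hypothetical.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]
Module = Callable[[Vector], Vector]


def make_scaling_module(scale: float) -> Module:
    """Computation function: a hypothetical toy module that scales its input."""
    return lambda x: [scale * xi for xi in x]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over routing scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route(x: Vector, router_weights: Sequence[Vector]) -> List[float]:
    """Routing function: dot-product score per module, normalized with softmax."""
    scores = [sum(w_i * x_i for w_i, x_i in zip(w, x)) for w in router_weights]
    return softmax(scores)


def aggregate(outputs: Sequence[Vector], weights: Sequence[float]) -> Vector:
    """Aggregation function: weighted sum of the module outputs."""
    dim = len(outputs[0])
    return [sum(w * out[d] for w, out in zip(weights, outputs)) for d in range(dim)]


def modular_forward(x: Vector, modules: Sequence[Module], router_weights: Sequence[Vector]) -> Vector:
    """Route the input, run every module, and aggregate their outputs."""
    weights = route(x, router_weights)
    outputs = [module(x) for module in modules]
    return aggregate(outputs, weights)
```

For example, with two modules that scale by 1.0 and 3.0 and all-zero router weights, the router splits its weight evenly, so `modular_forward([1.0, 2.0], [make_scaling_module(1.0), make_scaling_module(3.0)], [[0.0, 0.0], [0.0, 0.0]])` returns `[2.0, 4.0]`. Replacing the soft weights with a top-1 choice would turn this into hard routing, one of the design choices such an overview covers.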

From Sebastian Ruder’s NLP News - Exploring tool use

Language models “are limited to producing natural language, which does not allow them to interact with the real world. This can be ameliorated by allowing the model to access external tools—by predicting special tokens or…
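As a rough illustration of the special-token idea, here is a minimal sketch that assumes a hypothetical call syntax `[ToolName(argument)]`, loosely in the spirit of Toolformer-style API calls. A wrapper scans generated text, runs the matching registered tool, and splices the result back in. As a simplification, it post-processes a finished string, whereas real systems typically pause generation at the call and resume with the result; the `Calculator` tool and its "a op b" input format are illustrative assumptions.

```python
import operator
import re
from typing import Callable, Dict

# Hypothetical tool-call syntax emitted by the model: [ToolName(argument)]
TOOL_CALL = re.compile(r"\[(\w+)\(([^)]*)\)\]")

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


def calculator(expr: str) -> str:
    """Toy tool: evaluates 'a op b' for two numbers without using eval()."""
    left, op, right = expr.split()
    result = OPS[op](float(left), float(right))
    return f"{result:g}"


TOOLS: Dict[str, Callable[[str], str]] = {"Calculator": calculator}


def execute_tool_calls(text: str) -> str:
    """Replace each tool-call span with the tool's output; unknown tools are left as-is."""

    def run(match: re.Match) -> str:
        name, arg = match.group(1), match.group(2)
        tool = TOOLS.get(name)
        if tool is None:
            return match.group(0)
        return tool(arg)

    return TOOL_CALL.sub(run, text)
```

For example, `execute_tool_calls("The total is [Calculator(400 / 8)] units.")` returns `"The total is 50 units."`, while a call to an unregistered tool such as `[Search(weather)]` passes through unchanged.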

The Big Picture workshop @ EMNLP23 is just one week away, and we (Allyson Ettinger, Nora Kassner, Sebastian Ruder, Noah A. Smith) have an incredible program awaiting you!

bigpictureworkshop.com